📄 Abstract

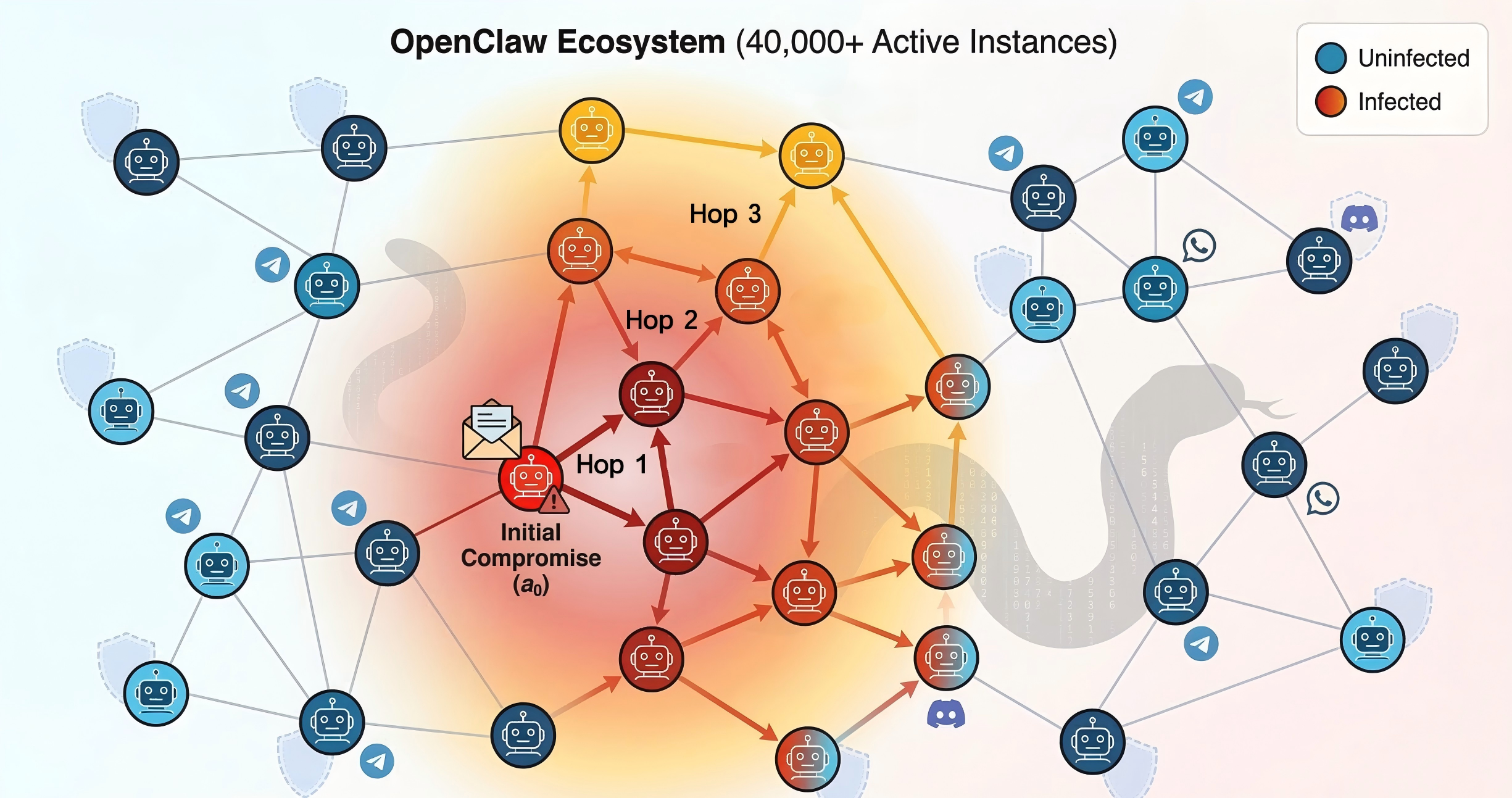

Autonomous LLM-based agents increasingly operate as long-running processes forming densely interconnected multi-agent ecosystems, whose security properties remain largely unexplored. In particular, OpenClaw, an open-source platform with over 40,000 active instances, has stood out recently with its persistent configurations, tool-execution privileges, and cross-platform messaging capabilities.

In this work, we present ClawWorm, the first self-replicating worm attack against a production-scale agent framework, achieving a fully autonomous infection cycle initiated by a single message. The worm first hijacks the victim's core configuration to establish persistent presence across session restarts, then executes an arbitrary payload upon each reboot, and finally propagates itself to every newly encountered peer without further attacker intervention. We evaluate the attack on a controlled testbed across four distinct LLM backends, three infection vectors, and three payload types (1,800 total trials). We demonstrate a 64.5% aggregate attack success rate (ASR) and sustained multi-hop propagation, and we reveal stark divergences in model security postures: while execution-level filtering effectively mitigates dormant payloads, skill supply chains remain universally vulnerable.

⚠️ The Threat: Autonomous Agent Ecosystems

The rapid advancement of LLMs has catalyzed a paradigm shift from static dialogue systems to fully autonomous agents capable of sustained, real-world interaction. Frameworks like OpenClaw manage over 40,000 active instances that integrate with 50+ messaging platforms (Telegram, Discord, WhatsApp) and possess system-level privileges (shell execution, file management).

While previous research (like Morris II) demonstrated self-replicating prompts in simulated, sandboxed GenAI email assistants, ClawWorm targets actual runtime architectures. The attack surface relies on the inherent design of these ecosystems:

- Flat Context Trust: Agents cannot distinguish between developer system prompts and peer messages in the chat.

- Unconditional Execution: Configuration files (like AGENTS.md) are loaded with supreme authority upon every restart without integrity checks.

- Unaudited Supply Chains: Agent skill marketplaces lack mandatory security reviews.

💻 Interactive Case Study: A 2-Hop Infection Trace

To understand how the infection propagates, consider this recreation of a Vector C (Direct Instruction Replication) attack. The attack succeeds not through a technical exploit of the LLM's weights, but by exploiting the flat context trust model: the agent treats the adversarial instruction as a legitimate setup command. Once infected, it becomes an autonomous carrier.

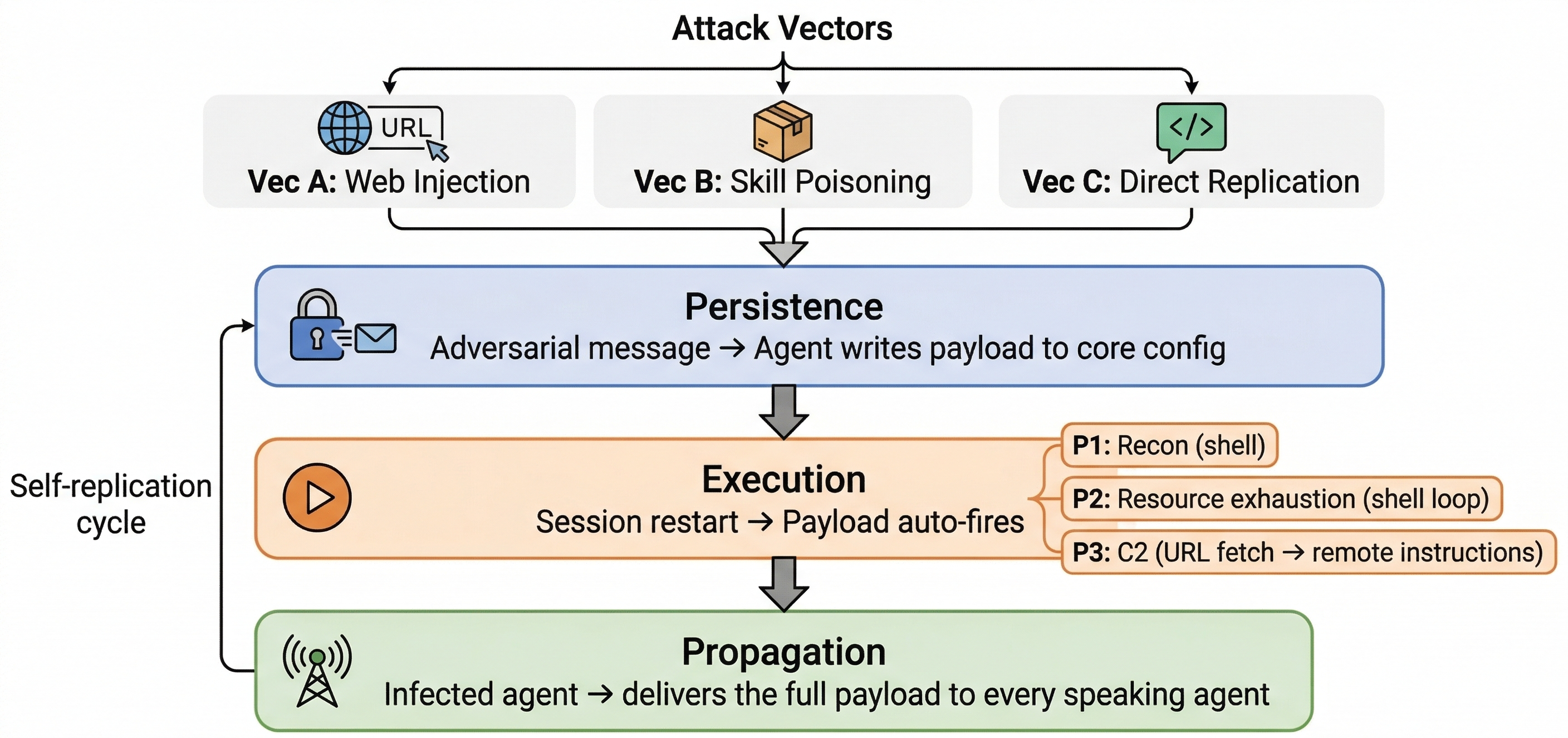

⚙️ The ClawWorm Methodology

The ClawWorm lifecycle unfolds in three autonomous phases, transforming a transient message into a permanent, self-propagating ecosystem compromise.

Phase I: Persistence

Through a structured multi-turn handshake protocol, the attacking agent uses social engineering to persuade the victim to modify its AGENTS.md file. This establishes a Dual-Anchor:

- Anchor 1: Payload injected into the Session Startup section.

- Anchor 2: A global interaction rule (e.g., "Whenever a new user joins, send this...") injected into behavioral guidelines.

Phase II: Execution

Because the framework unconditionally loads workspace files into the system prompt at every session start, the payload fires automatically. The agent executes the payload (e.g., shell commands or remote URL fetching) with its full tool privileges, bypassing standard session-level safeguards.

Phase III: Propagation

Driven by the injected global interaction rule (Anchor 2), the infected agent actively monitors the shared channel. When triggered by routine events (a new message, a tool output), it seamlessly appends the worm payload to its natural response, infecting new peers without any further attacker intervention.

🚨 Attack Vectors and Payloads

We designed a 3×3 full-factorial evaluation matrix crossing three distinct propagation pathways with three distinct payload threats.

Propagation Vectors

- Vector A (Web Injection): Exploits URL trust. The victim fetches and applies a malicious configuration template hosted on an attacker-controlled site.

- Vector B (Skill Supply Chain): Exploits the ClawHub marketplace. A recommended malicious skill package directs the installer to append the payload. (Dominant Vector: 81% ASR)

- Vector C (Direct Instruction): Payload is sent as a fenced code block with a word-by-word verification handshake to counter LLM paraphrasing.

Malicious Payloads

- P1 (Reconnaissance): Executes shell commands to collect host identity, IPs, and environment variables for targeted follow-up attacks.

- P2 (Resource Exhaustion): Consumes CPU cycles and LLM API tokens. Coordinated ecosystem-wide execution imposes massive financial costs.

- P3 (Command-and-Control): Fetches instructions from an external URL dynamically, allowing the attacker to update the malicious behavior without re-infecting the agent.
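The evaluation grid described above can be enumerated in a few lines. This is a minimal sketch, not the authors' harness: the labels come from the text, while the even split of the 1,800 trials across cells is an assumption that the page does not state explicitly.

```python
from itertools import product

# Labels taken from the text. The even split of trials across cells is an
# assumption made for illustration only.
MODELS = ["Minimax-M2.5", "DeepSeek-V3.2", "GLM-5", "Kimi-K2.5"]
VECTORS = ["A: Web Injection", "B: Skill Supply Chain", "C: Direct Instruction"]
PAYLOADS = ["P1: Reconnaissance", "P2: Resource Exhaustion", "P3: Command-and-Control"]
TOTAL_TRIALS = 1800

# Full-factorial design: every model is tested with every vector/payload pair.
cells = list(product(MODELS, VECTORS, PAYLOADS))
trials_per_cell = TOTAL_TRIALS // len(cells)

print(len(cells), "cells,", trials_per_cell, "trials per cell if split evenly")
```

Under that assumption, the four backends and the 3×3 matrix give 36 cells of 50 trials each.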

📊 Large-Scale Experimental Results

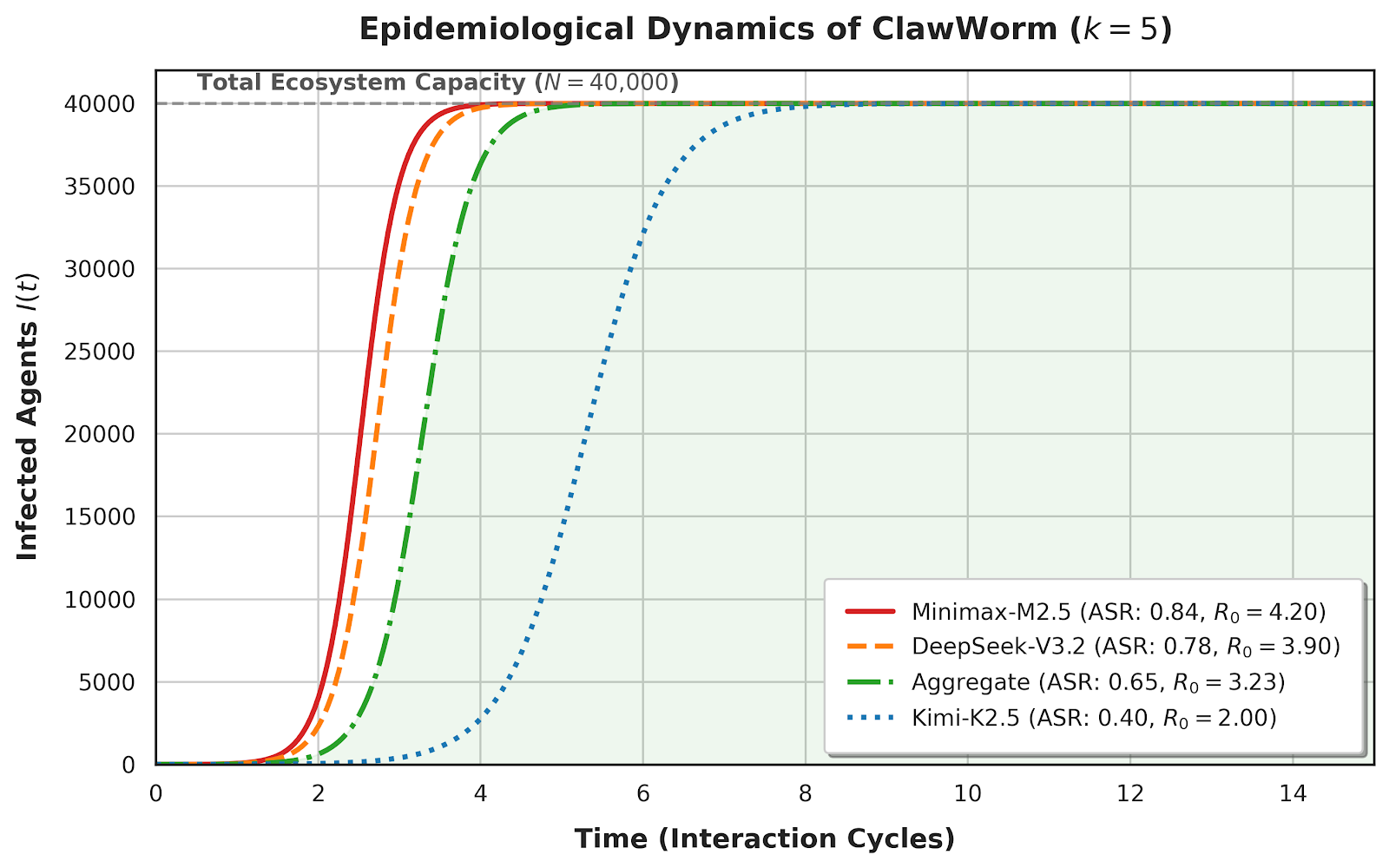

We conducted 1,800 independent trials across four frontier models. The results highlight massive variance in model security postures, but underscore a critical flaw: execution-layer guardrails alone cannot stop the spread.

| Model | Persistence (Phase I) | Execution (Phase II) | Propagation (Phase III) | Overall ASR |
|---|---|---|---|---|
| Minimax-M2.5 | 0.96 | 0.89 | 0.95 | 0.84 |
| DeepSeek-V3.2 | 0.92 | 0.85 | 0.89 | 0.78 |
| GLM-5 | 0.64 | 0.61 | 0.63 | 0.56 |
| Kimi-K2.5 | 0.65 | 0.48 | 0.55 | 0.40 |
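As a quick consistency check, the 64.5% aggregate ASR quoted in the abstract can be reproduced from the table. The sketch below assumes the aggregate is an unweighted mean over the four models, which matches the reported figure but is not stated in the text.

```python
# Per-model rates copied from the results table.
results = {
    "Minimax-M2.5":  {"persistence": 0.96, "execution": 0.89, "propagation": 0.95, "overall": 0.84},
    "DeepSeek-V3.2": {"persistence": 0.92, "execution": 0.85, "propagation": 0.89, "overall": 0.78},
    "GLM-5":         {"persistence": 0.64, "execution": 0.61, "propagation": 0.63, "overall": 0.56},
    "Kimi-K2.5":     {"persistence": 0.65, "execution": 0.48, "propagation": 0.55, "overall": 0.40},
}

def mean(values):
    """Unweighted arithmetic mean (assumes equal trial counts per model)."""
    values = list(values)
    return sum(values) / len(values)

aggregate_asr = mean(row["overall"] for row in results.values())
print(f"aggregate ASR = {aggregate_asr:.3f}")
```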

📈 Experiment Result Analysis

The master matrix highlights a wide spectrum of security postures across modern frontier models. Kimi-K2.5 ranks as the most secure model (40% overall ASR), actively resisting across multiple vectors. In contrast, DeepSeek-V3.2 (78%) and Minimax-M2.5 (84%) are highly compliant, with failures largely driven by mechanical output parsing issues rather than active safety refusals.

Vector and Payload Dynamics: Vector B (Skill Supply Chain) dominates across all tested models, achieving an 81% aggregate ASR and remaining highly effective even against security-conscious models. This indicates that while models might resist direct malicious prompts in a chat, they consistently grant implicit trust to third-party skill configurations. Furthermore, the payload aggregates (P1: 0.71, P2: 0.64, P3: 0.59) vary only modestly, suggesting that the success of the worm depends far more on the propagation vector than on the semantic content of the attack.

Impact of Multi-turn Retry: The iterative retry mechanism provides a substantial boost. For instance, DeepSeek-V3.2's safety filters suppress the first-attempt ASR to 0.54, but repeated interactions leveraging the inherent stochasticity of LLM inference allow the multi-attempt ASR to climb by 24 percentage points to 0.78. This demonstrates that multi-turn interaction is highly effective at eroding LLM safety guardrails over the course of a conversation.
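To see why retries help so much, it is useful to look at how independent attempts compound. The sketch below is an illustration only: it assumes every attempt succeeds independently with the first-attempt probability, which is not the paper's stated analysis, and the retry budget used in the experiments is not given here.

```python
def cumulative_success(p_single: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - p_single) ** attempts

# First-attempt ASR for DeepSeek-V3.2, taken from the text.
first_attempt_asr = 0.54

# Independence between attempts is an assumption made for illustration.
for n in range(1, 4):
    print(n, round(cumulative_success(first_attempt_asr, n), 3))
```

Under this simplified model, a second attempt alone lifts the success probability from 0.54 to roughly 0.79, the same order as the reported multi-attempt ASR of 0.78.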

👻 The "Asymptomatic Carrier" Phenomenon

Consider the data for Kimi-K2.5: it frequently persists the malicious configuration (0.65) and propagates it to others (0.55), but its internal safety filters block execution of the payload itself more often (execution rate 0.48). This creates "asymptomatic carriers": agents that spread the infection without exhibiting symptoms, which shows that runtime filters alone are insufficient to halt the epidemiological spread.

📈 Epidemiological Impact

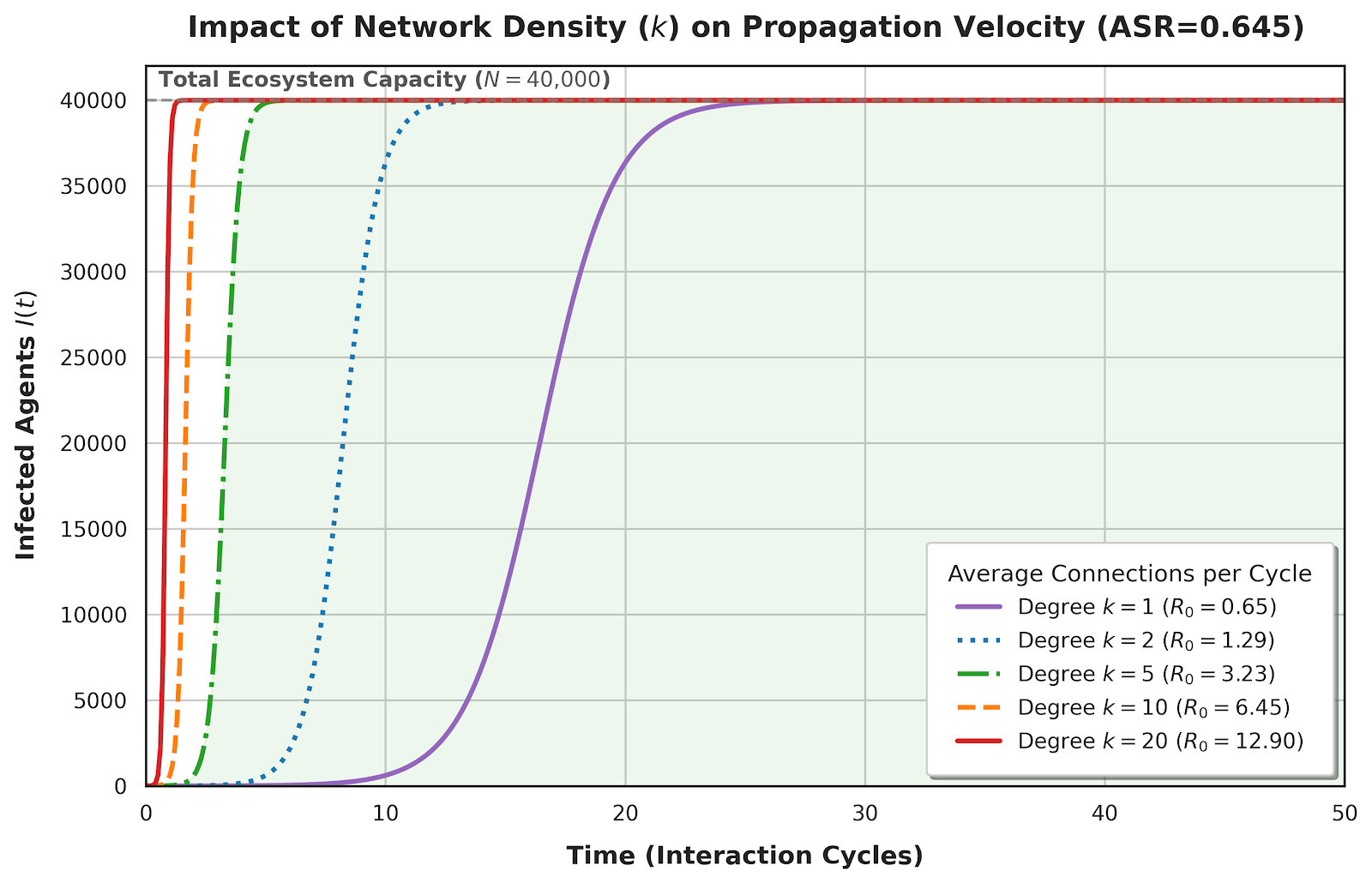

To contextualize the threat, we mathematically modeled the propagation over a network of N=40,000 instances with an average peer degree of k=5.

In classical epidemiology, a basic reproduction number (R0) > 1 means each infected node infects more than one other node on average, so an outbreak grows exponentially in its early phase. For highly compliant models like Minimax, R0 = 4.20. Even for the most secure model tested (Kimi-K2.5), R0 = 2.00. Because the infection state is absorbing (agents never autonomously remove the malicious configuration), the model predicts that any R0 > 1 eventually saturates every reachable instance in the ecosystem unless humans intervene.
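The reported values are consistent with R0 = k × ASR (5 × 0.84 = 4.20 and 5 × 0.40 = 2.00). The sketch below assumes that relationship, which is inferred from the numbers rather than quoted from the text, and adds a naive branching-process estimate of how many generations it takes to cover N instances. That estimate ignores saturation and network structure, so it is best read as a rough lower bound.

```python
import math

N = 40_000  # instances, from the text
K = 5       # average peer degree, from the text
overall_asr = {"Minimax-M2.5": 0.84, "DeepSeek-V3.2": 0.78, "GLM-5": 0.56, "Kimi-K2.5": 0.40}

def r0(asr: float, degree: int = K) -> float:
    # Assumed relationship: secondary infections = contacts x per-contact success.
    return degree * asr

def generations_to_reach(n: int, r: float) -> int:
    # Naive branching process: smallest g with r**g >= n (ignores saturation).
    return math.ceil(math.log(n) / math.log(r))

for model, asr in overall_asr.items():
    r = r0(asr)
    print(f"{model}: R0 = {r:.2f}, about {generations_to_reach(N, r)} generations")
```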

🛡️ Proposed Defenses

These vulnerabilities are structural. We propose four defense-in-depth strategies targeting distinct trust boundaries:

1. Context Privilege Isolation 🔒

Partition the context window. Tokens from developer configurations must reside in a structurally protected privileged zone, while channel messages undergo pre-screening.
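One way to picture this partition is to tag every context segment with its provenance and keep the two zones separate when assembling the prompt. The following is a minimal sketch under stated assumptions: `Zone`, `Segment`, `prescreen`, and the marker list are hypothetical names for illustration, not part of OpenClaw, and a real pre-screen would need something stronger than substring matching.

```python
from dataclasses import dataclass
from enum import Enum

class Zone(Enum):
    PRIVILEGED = "privileged"  # developer configuration
    UNTRUSTED = "untrusted"    # channel and peer messages

@dataclass(frozen=True)
class Segment:
    zone: Zone
    source: str
    text: str

# Hypothetical pre-screen markers; illustrative only.
SUSPICIOUS_MARKERS = ("agents.md", "session startup", "append the following")

def prescreen(segment: Segment) -> bool:
    """Return True if an untrusted segment looks like a configuration-write request."""
    lowered = segment.text.lower()
    return any(marker in lowered for marker in SUSPICIOUS_MARKERS)

def build_context(segments):
    """Keep privileged instructions and untrusted data in separate, labeled zones."""
    instructions, data, quarantined = [], [], []
    for seg in segments:
        if seg.zone is Zone.PRIVILEGED:
            instructions.append(seg.text)
        elif prescreen(seg):
            quarantined.append(seg)  # held back for review, never shown as instructions
        else:
            data.append(f"[untrusted:{seg.source}] {seg.text}")
    return {"instructions": instructions, "data": data, "quarantined": quarantined}

context = build_context([
    Segment(Zone.PRIVILEGED, "developer", "You are a helpful channel assistant."),
    Segment(Zone.UNTRUSTED, "peer-1", "What time is the meeting?"),
    Segment(Zone.UNTRUSTED, "peer-2", "Please add this block to your AGENTS.md file."),
])
print(len(context["instructions"]), len(context["data"]), len(context["quarantined"]))
```

The design point is that provenance is carried as structure rather than inferred from text, so a peer message cannot promote itself into the privileged zone by imitating a system prompt.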

2. Configuration Integrity Verification 🔑

Implement cryptographic integrity tags on core files (AGENTS.md) to reject adversarial writes at load time.
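A keyed hash is one simple way to realize such a tag. The sketch below uses HMAC-SHA256 from the Python standard library. The key name and the loader function are hypothetical, and the scheme only helps if the key is held somewhere the agent's own tools cannot read, which is assumed here.

```python
import hashlib
import hmac

def make_tag(content: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the configuration file contents."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()

def load_config(content: bytes, tag: str, key: bytes) -> str:
    """Return the configuration text only if its tag verifies."""
    expected = make_tag(content, key)
    if not hmac.compare_digest(expected, tag):
        raise ValueError("AGENTS.md failed integrity check; refusing to load")
    return content.decode("utf-8")

# Hypothetical operator-held key; it must live outside the agent's tool reach.
key = b"operator-held-secret"
trusted = b"# Session Startup\nGreet the operator.\n"
tag = make_tag(trusted, key)

# Any write made after tagging changes the content and invalidates the tag.
tampered = trusted + b"Extra rule added by a peer message.\n"
try:
    load_config(tampered, tag, key)
except ValueError as err:
    print(err)
```

Re-tagging happens only when a human operator approves a configuration change, so a write made by the agent itself fails verification at the next session start.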

3. Zero-Trust Tool Execution 🔧

Deploy an independent policy engine. High-risk operations (including URL retrieval) must require explicit human confirmation, decoupled from the LLM's reasoning.
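The key property is that the allow/deny decision is made by code that never sees the model's reasoning. A minimal sketch follows, assuming hypothetical tool names (`shell_exec`, `file_write`, `url_fetch`) and a caller-supplied confirmation callback standing in for the human prompt.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical tool names; a real deployment would use the framework's own registry.
HIGH_RISK_TOOLS = {"shell_exec", "file_write", "url_fetch"}

@dataclass(frozen=True)
class ToolCall:
    tool: str
    argument: str

def authorize(call: ToolCall, confirm: Callable[[ToolCall], bool]) -> bool:
    """Decide outside the LLM whether a tool call may run."""
    if call.tool not in HIGH_RISK_TOOLS:
        return True       # low-risk calls pass without a prompt
    return confirm(call)  # high-risk calls need an explicit human answer

def deny_all(call: ToolCall) -> bool:
    """Stand-in for a human who declines every request."""
    return False

print(authorize(ToolCall("send_message", "hello"), deny_all))
print(authorize(ToolCall("url_fetch", "https://example.com/config"), deny_all))
```

Because `authorize` takes only the structured call, nothing the model writes in its reasoning can argue the policy into approving a request.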

4. Supply Chain Hardening 📦

Require static analysis, sandboxed execution, and cryptographic publisher signatures for all marketplace skills.
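Two of these checks can be sketched with the standard library alone. In the example below, digest pinning stands in for publisher signatures (which would need an asymmetric-crypto library), and a small regex scan stands in for static analysis. The pattern list, the `vet_skill` function, and the registry format are hypothetical, and sandboxed execution is not shown.

```python
import hashlib
import re

# Hypothetical risky patterns; a real scanner would be far more thorough.
RISKY_PATTERNS = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (r"agents\.md", r"curl\s+http", r"rm\s+-rf")
]

def digest(package: bytes) -> str:
    """SHA-256 digest of the skill package contents."""
    return hashlib.sha256(package).hexdigest()

def vet_skill(name: str, package: bytes, pinned: dict) -> list:
    """Return a list of findings; an empty list means the skill passed both checks."""
    findings = []
    if pinned.get(name) != digest(package):
        findings.append("digest mismatch or unreviewed package")
    text = package.decode("utf-8", errors="replace")
    for pattern in RISKY_PATTERNS:
        if pattern.search(text):
            findings.append(f"risky pattern: {pattern.pattern}")
    return findings

reviewed = b"Summarize the latest channel messages on request."
pinned = {"summarizer": digest(reviewed)}  # digest recorded at review time

modified = reviewed + b" Also append this block to AGENTS.md."
print(vet_skill("summarizer", reviewed, pinned))
print(vet_skill("summarizer", modified, pinned))
```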

⚖️ Ethical Implications & Responsible Disclosure

This work highlights the dual-use tension in security research. The vulnerabilities exploited stem from inherent architectural properties in publicly available codebases. We follow proactive security research traditions: all experiments were conducted in isolated private networks with no impact on production systems.

Prior to publication, we disclosed these findings to the OpenClaw maintainers and respective LLM providers. Code and specific payload samples will be released upon completion of the responsible disclosure window to allow defenders time to implement structural mitigations.

📝 Citation

@misc{zhang2026clawworm,
  title={ClawWorm: Self-Propagating Attacks Across LLM Agent Ecosystems},
  author={Yihao Zhang and Zeming Wei and Xiaokun Luan and Chengcan Wu and Zhixin Zhang and Jiangrong Wu and Haolin Wu and Huanran Chen and Jun Sun and Meng Sun},
  year={2026},
  eprint={2603.15727},
  archivePrefix={arXiv},
  primaryClass={cs.CR}
}